A scalable crawler framework. It covers the whole lifecycle of a crawler: downloading, URL management, content extraction, and persistence. It simplifies the development of a specific crawler.

- Simple core with high flexibility.

- Simple API for HTML extraction.

- Annotate POJOs to customize a crawler; no configuration required.

- Multi-threading and distributed crawling support.

- Easy to integrate.

Add dependencies to your pom.xml:

    <dependency>
        <groupId>us.codecraft</groupId>
        <artifactId>webmagic-core</artifactId>
        <version>0.3.2</version>
    </dependency>
    <dependency>
        <groupId>us.codecraft</groupId>
        <artifactId>webmagic-extension</artifactId>
        <version>0.3.2</version>
    </dependency>

Write a class that implements PageProcessor:

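    import java.util.List;

    import us.codecraft.webmagic.Page;
    import us.codecraft.webmagic.Site;
    import us.codecraft.webmagic.Spider;
    import us.codecraft.webmagic.pipeline.ConsolePipeline;
    import us.codecraft.webmagic.processor.PageProcessor;

    public class OschinaBlogPageProcesser implements PageProcessor {

        private Site site = Site.me().setDomain("my.oschina.net")
                .addStartUrl("http://my.oschina.net/flashsword/blog");

        @Override
        public void process(Page page) {
            // Find links to individual blog posts and queue them for crawling.
            List<String> links = page.getHtml().links().regex("http://my\\.oschina\\.net/flashsword/blog/\\d+").all();
            page.addTargetRequests(links);
            // Extract the fields that are passed on to the pipeline.
            page.putField("title", page.getHtml().xpath("//div[@class='BlogEntity']/div[@class='BlogTitle']/h1").toString());
            page.putField("content", page.getHtml().$("div.content").toString());
            page.putField("tags", page.getHtml().xpath("//div[@class='BlogTags']/a/text()").all());
        }

        @Override
        public Site getSite() {
            return site;
        }

        public static void main(String[] args) {
            // Print the extracted fields to the console.
            Spider.create(new OschinaBlogPageProcesser())
                    .pipeline(new ConsolePipeline()).run();
        }
    }

page.addTargetRequests(links) adds URLs for crawling.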
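To see which links the regular expression in the example above would select, here is a minimal standalone sketch that uses only `java.util.regex`. `LinkFilterSketch` and the sample URLs are hypothetical, and the use of `Matcher.find()` is an assumption made for illustration; webmagic's own `regex()` selector may differ in detail.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper, not part of webmagic: filters links with a regex.
class LinkFilterSketch {

    // Same pattern as in the PageProcessor example.
    static final Pattern BLOG_POST =
            Pattern.compile("http://my\\.oschina\\.net/flashsword/blog/\\d+");

    // Keep the matched portion of every link that contains a blog-post URL.
    static List<String> filter(List<String> links) {
        List<String> kept = new ArrayList<>();
        for (String link : links) {
            Matcher m = BLOG_POST.matcher(link);
            if (m.find()) {
                kept.add(m.group());
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        // Made-up sample links; only the first one points at a single post.
        List<String> links = Arrays.asList(
                "http://my.oschina.net/flashsword/blog/145796",
                "http://my.oschina.net/flashsword/blog",
                "http://my.oschina.net/flashsword/admin");
        System.out.println(filter(links));
    }
}
```

Only the first sample link survives the filter, because the pattern requires a numeric post id after `/blog/`.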

You can also use annotations:

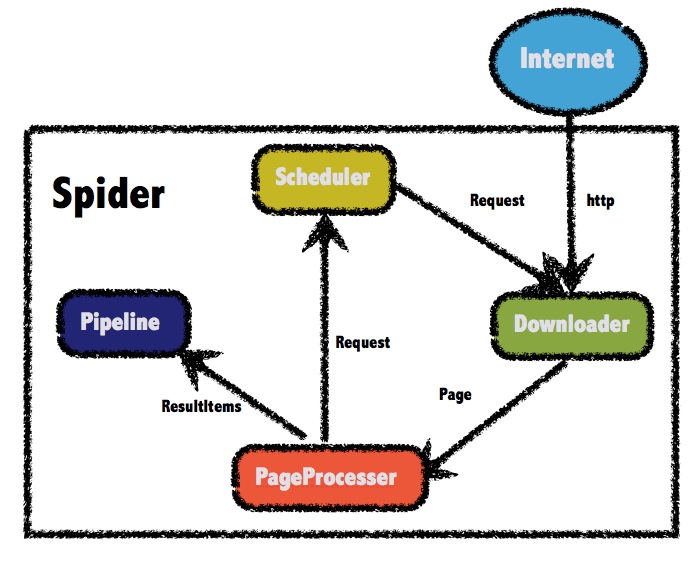

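    import java.util.List;

    import us.codecraft.webmagic.Site;
    import us.codecraft.webmagic.model.ConsolePageModelPipeline;
    import us.codecraft.webmagic.model.OOSpider;
    import us.codecraft.webmagic.model.annotation.ExtractBy;
    import us.codecraft.webmagic.model.annotation.TargetUrl;

    @TargetUrl("http://my.oschina.net/flashsword/blog/\\d+")
    public class OschinaBlog {

        // Each annotated field is filled from the page by the given expression.
        @ExtractBy("//title")
        private String title;

        @ExtractBy(value = "div.BlogContent", type = ExtractBy.Type.Css)
        private String content;

        @ExtractBy(value = "//div[@class='BlogTags']/a/text()", multi = true)
        private List<String> tags;

        public static void main(String[] args) {
            OOSpider.create(
                    Site.me().addStartUrl("http://my.oschina.net/flashsword/blog"),
                    new ConsolePageModelPipeline(), OschinaBlog.class).run();
        }
    }

The architecture of webmagic is modeled on Scrapy.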
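The four lifecycle stages named at the top of this README (downloading, URL management, content extraction, persistence) can be pictured as one loop. The sketch below is a toy illustration under stated assumptions: `CrawlLoopSketch`, `FAKE_WEB`, and the in-memory "store" are invented for this example and are not webmagic classes, and no network access takes place.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Queue;
import java.util.Set;

// Toy illustration only; none of these names are webmagic classes.
class CrawlLoopSketch {

    // Fake "web": URL -> outgoing links, so the sketch needs no network.
    static final Map<String, List<String>> FAKE_WEB = new HashMap<>();
    static {
        FAKE_WEB.put("/blog", Arrays.asList("/blog/1", "/blog/2"));
        FAKE_WEB.put("/blog/1", Arrays.asList("/blog", "/blog/2"));
        FAKE_WEB.put("/blog/2", Collections.<String>emptyList());
    }

    static List<String> crawl(String startUrl) {
        Queue<String> pending = new ArrayDeque<>(); // URL management: queue
        Set<String> seen = new HashSet<>();         // URL management: de-duplication
        List<String> stored = new ArrayList<>();    // persistence: in-memory store
        pending.add(startUrl);
        seen.add(startUrl);
        while (!pending.isEmpty()) {
            String url = pending.poll();
            // Downloading: look the page up in the fake web.
            List<String> links = FAKE_WEB.getOrDefault(url, Collections.<String>emptyList());
            // Content extraction: here the "content" is just the link count.
            String record = url + " -> " + links.size() + " links";
            // Persistence: append the extracted record to the store.
            stored.add(record);
            // Feed newly discovered URLs back into the queue.
            for (String link : links) {
                if (seen.add(link)) {
                    pending.add(link);
                }
            }
        }
        return stored;
    }

    public static void main(String[] args) {
        for (String record : crawl("/blog")) {
            System.out.println(record);
        }
    }
}
```

Each page is visited once even though `/blog/1` links back to `/blog`, because the `seen` set rejects URLs that were already queued.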

Javadocs: http://code4craft.github.io/webmagic/docs/en/

There are some samples in the webmagic-samples package.

Licensed under the Apache 2.0 license.

While writing webmagic, I referred to the projects below:

- Scrapy: a crawler framework in Python.

- Spiderman: another crawler framework in Java.